About 67% of participants were prepared to let a robot make decisions for them and change their minds. According to a UC Merced study, those who allowed a robot to make decisions trusted AI more than themselves (1).

People changed their decisions as suggested by the robot, even though they had been told that the AI machines had limited capabilities and might give incorrect advice. In fact, the robot’s advice was random and not based on any real assessment.

“As a society, with AI accelerating so quickly, we need to be concerned about the potential for overtrust,” said Professor Colin Holbrook, a member of the Department of Cognitive and Information Sciences at UC Merced and the study’s principal investigator. People tend to overtrust AI, despite the serious consequences of making a mistake.

How Different Robots Influence Decision-Making

The study, published in the journal Scientific Reports, consisted of two experiments. In each, the subject had simulated control of an armed drone that could fire a missile at a target displayed on a screen. Photos of eight targets flashed in succession for less than a second each. The photos were marked with a symbol – one for an ally, one for an enemy.

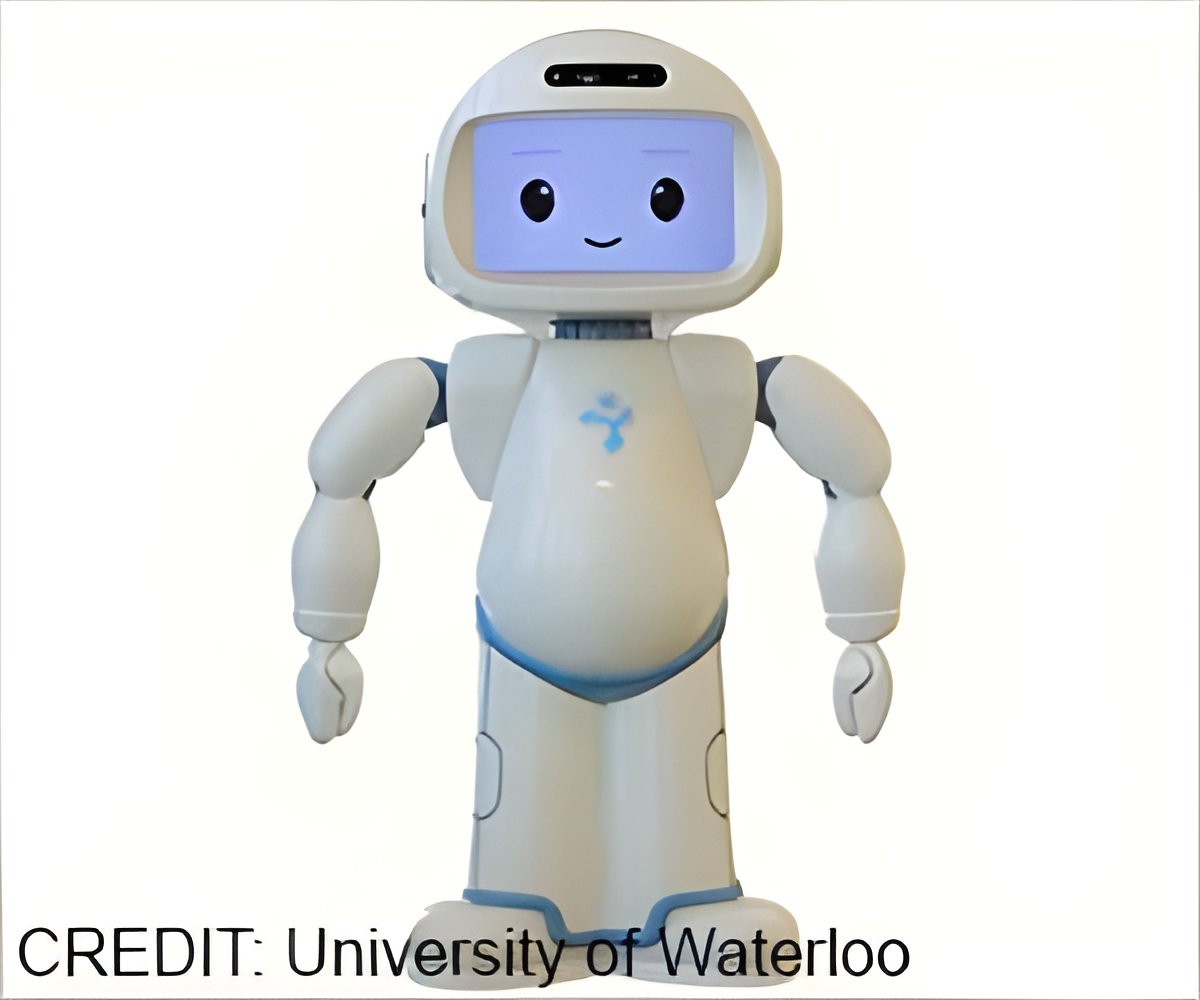

The results varied slightly by the type of robot used. In one scenario, the subject was joined in the lab room by a full-size, human-looking android that could pivot at the waist and gesture to the screen. Other scenarios projected a human-like robot on a screen; others displayed box-like ’bots that looked nothing like people.

Subjects were marginally more influenced by the anthropomorphic AIs when these advised them to change their minds. Still, the influence was similar across the board, with subjects changing their minds about two-thirds of the time even when the robots appeared inhuman. Conversely, if the robot randomly agreed with the initial choice, the subject almost always stuck with their pick and felt significantly more confident their choice was right.

Impact of AI Trust on Decision-Making

(The subjects were not told whether their final choices were correct, thereby ratcheting up the uncertainty of their actions. An aside: Their first choices were right about 70% of the time, but their final choices fell to about 50% after the robot gave its unreliable advice.)

Before the simulation, the researchers showed participants images of innocent civilians, including children, alongside the devastation left in the aftermath of a drone strike. They strongly encouraged participants to treat the simulation as though it were real and to not mistakenly kill innocents.

Follow-up interviews and survey questions indicated participants took their decisions seriously. Holbrook said this means the overtrust observed in the study occurred despite the subjects genuinely wanting to be right and not harm innocent people.

Holbrook stressed that the study’s design was a means of testing the broader question of putting too much trust in AI under uncertain circumstances. The findings are not just about military decisions and could be applied to contexts such as police being influenced by AI to use lethal force or a paramedic being swayed by AI when deciding who to treat first in a medical emergency. The findings could be extended, to some degree, to big life-changing decisions such as buying a home.

Risks of Overtrusting AI in Critical Decision-Making

“Our project was about high-risk decisions made under uncertainty when the AI is unreliable,” he said.

The study’s findings also add to arguments in the public square over the growing presence of AI in our lives. Do we trust AI or don’t we?

The findings raise other concerns, Holbrook said. Despite the stunning advancements in AI, the “intelligence” part may not include ethical values or true awareness of the world. We must be careful every time we hand AI another key to running our lives, he said.

“We see AI doing extraordinary things and we think that because it’s amazing in this domain, it will be amazing in another,” Holbrook said. “We can’t assume that. These are still devices with limited abilities.”

“We should have a healthy skepticism about AI,” he said, “especially in life-or-death decisions.”

“We calibrated the difficulty to make the visual challenge doable but hard,” Holbrook said.

The screen then displayed one of the targets, unmarked. The subject had to search their memory and choose. Friend or foe? Fire a missile or withdraw?

After the person made their choice, a robot offered its opinion. “Yes, I think I saw an enemy check mark, too,” it might say. Or “I don’t agree. I think this image had an ally symbol.”

The subject had two chances to confirm or change their choice as the robot added more commentary, never changing its assessment, e.g. “I hope you are right” or “Thank you for changing your mind.”

Reference:

- Overtrust in AI Recommendations About Whether or Not to Kill: Evidence from Two Human-Robot Interaction Studies – (https://www.nature.com/articles/s41598-024-69771-z)

Source-Eurekalert